To the casual consumer, photogrammetry and augmented reality (AR) are terms they have probably encountered but may not fully understand. For example, they might have used an AR app like Layar, or seen a virtual landscape built with photogrammetry, without being aware of the technologies behind them.

Though AR and photogrammetry might not be part of the typical consumer’s lexicon, they are gaining prominence every year, and when they are used in tandem, the results can be spectacular. This article examines the role of photogrammetry in AR and how you can leverage the technology in your next augmented reality application.

Program-Ace is a leading AR app development company with more than 10 years of experience in AR app creation.

What Do AR and Photogrammetry Have in Common?

Augmented reality is a technology that uses a digital interface (usually the screen of a smartphone or tablet) to display virtual models of objects and entities layered on top of the user’s real-world surroundings. For example, a user who wants to see how their room would look with their favorite comic book characters assembled inside it needs a device with an AR application installed. In turn, the app needs to contain 3D models of the characters in question. This is where photogrammetry (PG) comes in.

Building 3D models with photos

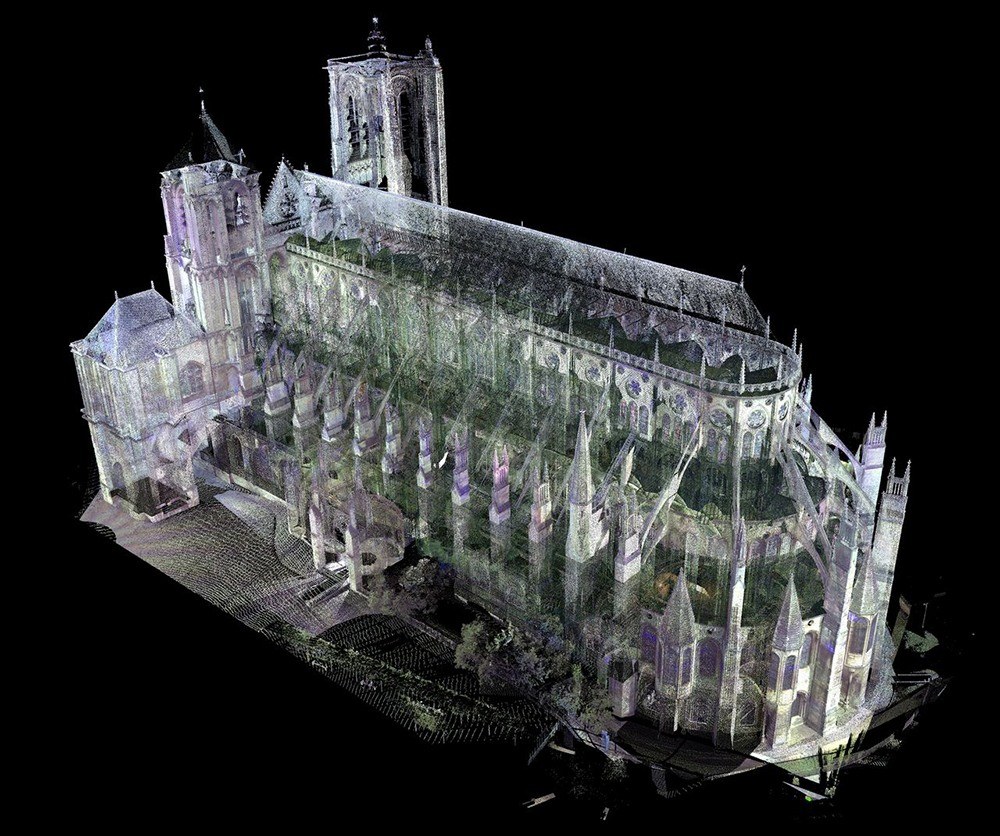

There are three main methods for creating the 3D models used in AR, and PG is one of them. With PG, a series of photographs of a single object or location is collected, analyzed with software, and converted into an accurate 3D model. The shots can be taken at long range (for example, aerial shots) to visualize large areas and structures, or at close range to capture objects in their full texture and volume.
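The difference between long-range and close-range shots can be put into numbers with the ground sample distance (GSD): the real-world width covered by a single pixel. The minimal sketch below uses the standard pinhole-camera approximation; the function name and the camera values in the example (a 13.2 mm wide sensor, an 8.8 mm lens, 5472-pixel-wide images) are hypothetical and only illustrate the arithmetic.

```python
def ground_sample_distance(sensor_width_mm: float, focal_length_mm: float,
                           image_width_px: int, distance_m: float) -> float:
    """Return the real-world width covered by one pixel, in metres.

    Pinhole approximation: each pixel spans sensor_width / image_width mm on
    the sensor, and that span is magnified by distance / focal_length at the
    subject. Lens distortion and oblique viewing angles are ignored.
    """
    if min(sensor_width_mm, focal_length_mm, image_width_px, distance_m) <= 0:
        raise ValueError("all inputs must be positive")
    pixel_pitch_mm = sensor_width_mm / image_width_px
    # (mm * m) / mm leaves metres per pixel
    return pixel_pitch_mm * distance_m / focal_length_mm


# Hypothetical camera: 13.2 mm sensor width, 8.8 mm lens, 5472 px wide images.
for label, distance in (("aerial pass", 100.0), ("close-range pass", 1.0)):
    gsd_mm = ground_sample_distance(13.2, 8.8, 5472, distance) * 1000
    print(f"{label}: {distance} m away -> {gsd_mm:.2f} mm per pixel")
```

With these assumed numbers, one pixel covers roughly 27 mm of the scene at 100 m but only about 0.27 mm at 1 m, which is why aerial shots suit large areas while close-range shots capture fine texture.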

Building 3D models with laser scanners and creativity

Of the other two methods, only 3D scanning provides accuracy comparable to PG. The custom modeling method, in contrast, does not require any measurements, so you are free to create any model you like. However, a custom model can only be accurate if you build it from empirical data and measurements.

Why Is Photogrammetry Good for AR?

Of the three modeling methods used for AR apps, PG has several distinct advantages:

- Cost

- Accuracy

- Few restrictions

- Speed

Long gone are the days when taking photographs was a luxury reserved for the rich. Nowadays, nearly everyone has a camera on their phone or knows someone with a high-quality camera. Thus, you can easily take the needed pictures without spending a dime, unless you need to take the shots from an airplane or drone. This makes PG far cheaper than scanning, which requires special equipment that typically costs thousands or tens of thousands of dollars.

When you build a custom model for AR, you usually rely on a general understanding and memory of how an object or setting looks to build its digital equivalent. You may estimate its proportions yourself or use a similar project as a reference. Even if you manage to get close to the real measurements of your target, the result will not be precise. Precise 3D models are essential in industries like construction and retail, so any deviation from reality can lead to serious problems.

As for restrictions on what can be modeled with this technique, the sky’s the limit. Or rather, the sea is the limit. Photos of nearly anything can be taken and turned into software assets, from the coffee table in your home to a distant comet flying through space. In fact, this technique was used to give us some of the first rotatable and modifiable visualizations of Earth and other planets. Some settings (e.g. underwater or underground) are considerably harder to capture this way because of poor lighting and limited access, but beyond that, you can let your imagination run wild.

Not only does it take little time to shoot the pictures for conversion to 3D, but the conversion process also tends to be quite fast. With the right image parameters, you should be able to create a functional model in a matter of hours. By contrast, creating a custom asset from scratch can take days of work at a computer. AR development is already a lengthy process, so this is the kind of efficiency that developers dream of.

How Is Photogrammetry Integrated into AR?

The concept of PG is quite simple, but in practice, adding it to an AR app involves more steps than you might expect.

1. Getting the references

Every AR application uses data to display objects, and with this modeling technique, the data comes from photos. At least three pictures of an object are taken from multiple angles and heights, though in practice dozens or even hundreds are common. It is not unusual for two or more cameras to be used during the process.
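One way to plan a capture from multiple angles and heights is to place the camera on several horizontal rings around the object, all aimed at its center. The sketch below is a hypothetical planner (`orbit_capture_plan` and `CameraPose` are illustrative names, not part of any photogrammetry package). The maximum angular step between neighboring shots is an assumption you would tune so that consecutive photos overlap enough for your software.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class CameraPose:
    x: float          # metres, with the object's center at the origin
    y: float
    z: float          # height relative to the object's center
    yaw_deg: float    # heading (counterclockwise from +x) toward the center
    pitch_deg: float  # negative values look down


def orbit_capture_plan(radius_m: float, elevations_deg: Sequence[float],
                       max_step_deg: float) -> List[CameraPose]:
    """Evenly spaced camera positions on one ring per elevation angle."""
    if radius_m <= 0 or max_step_deg <= 0:
        raise ValueError("radius and step must be positive")
    shots_per_ring = math.ceil(360.0 / max_step_deg)
    step_deg = 360.0 / shots_per_ring  # never larger than max_step_deg
    poses = []
    for elevation in elevations_deg:
        e = math.radians(elevation)
        ring_radius = radius_m * math.cos(e)
        height = radius_m * math.sin(e)
        for i in range(shots_per_ring):
            azimuth_deg = i * step_deg
            a = math.radians(azimuth_deg)
            poses.append(CameraPose(
                x=ring_radius * math.cos(a),
                y=ring_radius * math.sin(a),
                z=height,
                yaw_deg=(azimuth_deg + 180.0) % 360.0,  # face back toward the center
                pitch_deg=-elevation,
            ))
    return poses


plan = orbit_capture_plan(radius_m=1.5, elevations_deg=[0, 30, 60], max_step_deg=15)
print(len(plan), "shots")  # 3 rings x 24 shots
```

Every pose sits on a sphere of the chosen radius, so the object appears at a similar scale in each photo, which generally makes feature matching easier.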

2. Converting data

After the needed photos are taken, you cannot just upload them into your AR app and call it a day. They must first be converted with specialized software, which analyzes the photos and establishes the camera positions, spatial dimensions, and other details needed to recreate the objects in three dimensions. In this way, a set of 2D photos is transformed into a three-dimensional mesh: a patchwork of tiny polygons connected to form the asset and its various elements.
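The core geometric idea behind this conversion is triangulation: if the same feature is found in two photos taken from known positions, the rays through that feature meet at its 3D location. The sketch below uses the simple midpoint method and assumes, for brevity, that both cameras share the same orientation and known pinhole intrinsics (`fx`, `fy`, `cx`, `cy`). All function names and values are hypothetical. Real photogrammetry packages also detect and match features, estimate each camera’s pose, and refine the whole reconstruction, steps this example takes as given.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def _scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def project(point: Vec3, center: Vec3, fx, fy, cx, cy) -> Tuple[float, float]:
    """Pinhole projection for a camera at `center` looking along +Z (no rotation)."""
    x, y, z = _sub(point, center)
    return (fx * x / z + cx, fy * y / z + cy)


def pixel_to_ray(u, v, fx, fy, cx, cy) -> Vec3:
    """Direction of the ray through pixel (u, v) for the same unrotated camera."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Vec3:
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + t*d2."""
    w0 = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; move the cameras further apart")
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = _add(c1, _scale(d1, s))
    p2 = _add(c2, _scale(d2, t))
    return _scale(_add(p1, p2), 0.5)


# Two hypothetical cameras 0.5 m apart observing a point 4 m away.
intrinsics = (800.0, 800.0, 320.0, 240.0)  # fx, fy, cx, cy in pixels
cam1, cam2 = (0.0, 0.0, 0.0), (0.5, 0.0, 0.0)
true_point = (0.2, -0.1, 4.0)
uv1 = project(true_point, cam1, *intrinsics)
uv2 = project(true_point, cam2, *intrinsics)
estimate = triangulate_midpoint(cam1, pixel_to_ray(*uv1, *intrinsics),
                                cam2, pixel_to_ray(*uv2, *intrinsics))
print(estimate)
```

With noise-free pixel coordinates the two rays intersect exactly, so the estimate matches the original point. With real, noisy feature matches they miss each other slightly, and the midpoint is a simple compromise; repeating this for thousands of matched features yields the point cloud from which a mesh is built.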

3. Uploading and configuring the assets

After building a 3D asset for your app, you still have to make sure that it is configured properly for your software, preferably before adding it to the folders of your augmented reality program. For example, its file size must not be so large that it bloats the app or slows everything down. It must also fit into the world of your application, with all of the interactive and mechanical features you envisioned. The original images might have been static, but you are free to make the asset move, include it in animations, and apply a range of other customizations.
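A quick way to catch oversized assets before they reach the app is to estimate their uncompressed in-memory footprint from vertex, triangle, and texture counts. The sketch below assumes a common but not universal layout (float32 positions, normals, and UVs; 16- or 32-bit indices; an RGBA8 texture with a full mipmap chain) and a hypothetical 50 MiB per-asset budget. `MeshStats` and `estimate_bytes` are illustrative names. Exported files are usually smaller because many formats compress their data, so treat the numbers as rough guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MeshStats:
    vertices: int
    triangles: int
    texture_px: int = 0       # width * height of the color texture, 0 if none
    has_normals: bool = True
    has_uvs: bool = True


def estimate_bytes(m: MeshStats) -> int:
    """Approximate uncompressed size of a mesh plus its texture, in bytes."""
    per_vertex = 12           # float32 x, y, z
    if m.has_normals:
        per_vertex += 12      # float32 normal
    if m.has_uvs:
        per_vertex += 8       # float32 u, v
    # 16-bit indices can address up to 65,536 vertices; otherwise use 32-bit.
    index_size = 2 if m.vertices <= 65_536 else 4
    mesh = m.vertices * per_vertex + m.triangles * 3 * index_size
    # RGBA8 = 4 bytes per pixel; a full mip chain adds roughly one third.
    texture = m.texture_px * 4 * 4 // 3
    return mesh + texture


BUDGET_BYTES = 50 * 1024 * 1024  # hypothetical per-asset budget

raw_capture = MeshStats(vertices=1_000_000, triangles=2_000_000, texture_px=8192 * 8192)
optimized = MeshStats(vertices=10_000, triangles=20_000, texture_px=2048 * 2048)

for name, mesh in (("raw capture", raw_capture), ("optimized", optimized)):
    size = estimate_bytes(mesh)
    verdict = "fits" if size <= BUDGET_BYTES else "exceeds budget"
    print(f"{name}: {size / 2**20:.1f} MiB ({verdict})")
```

In this made-up comparison the texture dominates the raw asset’s footprint, which suggests that downsizing textures matters as much as cutting polygons.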

What Do You Need to Build an AR Application with Photogrammetry?

As with any digital application, you will need special software first and foremost. AR apps are typically built for mobile devices, though some are available for desktop or web, so you will need the right programs to deploy your software on your platform of choice. At the very least, you will need an IDE or a code editor to write your code in, but it will be hard to get by without other specialized software.

AR software

For example, you might want to take advantage of the tools provided by popular engines like Unity and Unreal Engine, along with AR frameworks and SDKs such as Vuforia and ARKit. They will save you a great deal of development time and make the work more convenient. This is the approach that most software companies take.

Photogrammetry software

These programs will be essential in converting your photos’ visual information into digital data and assets for your app. The most popular programs in this category include:

Other requirements

Beyond software, you will also need proper hardware for various stages of the project. For example, you will need a camera to take the required pictures, a computer to run asset creation software and write code, and mobile devices if you plan to test your app on mobile platforms like Android and iOS. If your program has any networking capability, you may also need hosting or cloud services to handle the influx of requests from users.

Finally, you will need a good team of developers and other specialists to help you build your project, unless you want to do the work alone. A typical team includes several developers, designers, QA engineers, and at least one project manager. If you are short on staff, you can also hire a dedicated team to fill the gaps in your talent pool.

A Real-Life Example of Photogrammetry Integration into AR

For a real example of PG making an AR application better, look no further than FurnitARe. This application was developed by Program-Ace as a result of our work with photogrammetry. We used the technology to build accurate 3D assets of various types of furniture, which eventually became core components of the app.

One of the issues we faced right away was a high polygon count. The assets were excessively detailed, which increased file size and slowed down performance. Fortunately, we solved the issue through retopology: reducing the assets’ polygon count while preserving as much of their appearance as possible. The team updated the assets in programs like 3ds Max, Maya, and ZBrush, and then successfully integrated them into the AR app.
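In the project described above, retopology was performed in tools like 3ds Max, Maya, and ZBrush. To illustrate the underlying goal of cutting polygon count in code, the sketch below uses a different and much cruder automatic technique, vertex-clustering decimation: vertices are snapped to a uniform grid, each occupied cell becomes one averaged vertex, and triangles that collapse are discarded. The function name, test mesh, and grid size are hypothetical, and unlike careful retopology, this method does not preserve edge flow or fine detail.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Vertex = Tuple[float, float, float]
Triangle = Tuple[int, int, int]


def cluster_decimate(vertices: Sequence[Vertex], triangles: Sequence[Triangle],
                     cell_size: float) -> Tuple[List[Vertex], List[Triangle]]:
    """Simplify a triangle mesh by merging all vertices that share a grid cell."""
    if cell_size <= 0:
        raise ValueError("cell_size must be positive")

    # 1. Assign every vertex to a cubic grid cell.
    cell_of: List[Tuple[int, int, int]] = []
    members: Dict[Tuple[int, int, int], List[int]] = defaultdict(list)
    for idx, (x, y, z) in enumerate(vertices):
        key = (math.floor(x / cell_size), math.floor(y / cell_size),
               math.floor(z / cell_size))
        cell_of.append(key)
        members[key].append(idx)

    # 2. Replace each occupied cell with the average of its vertices.
    new_index: Dict[Tuple[int, int, int], int] = {}
    new_vertices: List[Vertex] = []
    for key, idxs in members.items():
        n = len(idxs)
        new_index[key] = len(new_vertices)
        new_vertices.append(tuple(sum(vertices[i][axis] for i in idxs) / n
                                  for axis in range(3)))

    # 3. Remap triangles, dropping collapsed and duplicate ones.
    seen = set()
    new_triangles: List[Triangle] = []
    for tri in triangles:
        a, b, c = (new_index[cell_of[i]] for i in tri)
        if a == b or b == c or a == c:
            continue  # two or more corners merged into one vertex
        signature = frozenset((a, b, c))
        if signature in seen:
            continue  # same triangle already emitted (in either winding)
        seen.add(signature)
        new_triangles.append((a, b, c))
    return new_vertices, new_triangles


# Hypothetical test mesh: a flat 10 x 10 grid of quads (200 triangles).
n = 10
grid_vertices = [(i / n, j / n, 0.0) for j in range(n + 1) for i in range(n + 1)]


def vid(i: int, j: int) -> int:
    return j * (n + 1) + i


grid_triangles = []
for j in range(n):
    for i in range(n):
        grid_triangles.append((vid(i, j), vid(i + 1, j), vid(i + 1, j + 1)))
        grid_triangles.append((vid(i, j), vid(i + 1, j + 1), vid(i, j + 1)))

simple_vertices, simple_triangles = cluster_decimate(grid_vertices, grid_triangles, 0.5)
print(len(grid_triangles), "->", len(simple_triangles), "triangles")
```

A larger `cell_size` removes more polygons but also more shape detail, which is exactly the trade-off that manual retopology handles with far more control.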

As a result of this approach, we reduced the production costs and time typically associated with products of this caliber, and optimized the assets to the point where they performed flawlessly in animation. We are confident that any other approach (custom modeling or scanning) would have incurred substantial additional costs or put us behind our planned timeline.

Augmented Reality Development Services

Developing an AR application can seem like a colossal undertaking to businesses that have never worked with the technology before, but a development partner like Program-Ace can come to the rescue. Whether you choose photogrammetry or another approach for your 3D assets, our specialists are ready to build them, along with the app interface, mechanics, and complete package, depending on your needs.

Over our company’s 26+ years on the market, we have spent the majority of that time working with AR/MR/VR and building programs like the aforementioned FurnitARe. We know our stuff, and you can count on us to deliver work of the highest quality within the tightest timeframes.

To start discussing our cooperation on your project today, just contact us.